The Macintosh Moment for AI Agents

AI agents can do the work, but most founders can't wire them up. The Macintosh solved this for computers. What solves it for agents?

Aidan Hornsby

@aidanhornsby

I'm terminally online.

Every day, my co-founders and I send each other dozens of links to cool AI workflows, frameworks, coding tools, and demos of agents doing things that felt impossible even two months ago.

We can describe exactly what we want agents to do at Toyo. We think about it all day, every day, yet we still feel like we can't build any of it fast enough.

If that's true for us, what hope is there for other founders who know AI should be transforming how they operate but can't figure out how to make it happen?

Something big is happening (but not for everyone)

Less than one week after Matt Shumer published his viral essay "Something Big Is Happening," it hit 80 million views.

The essay became a lightning rod. Developers and founders shared it as confirmation of what they're experiencing. Critics called it hype.

What interested me most wasn't what Matt was saying, or whether he's right about the timelines. It was who was sharing it, and who wasn't.

In the communities I follow, technical early adopters have figured out how to get extraordinary leverage from AI. They're building custom internal tools in an afternoon, shipping in hours what took teams weeks less than a year ago. A single developer with Claude Code or Cursor can prototype a working application faster than most companies can write a requirements document. Some are doing it by voice, vibe coding while out for a walk. It feels insane.

Outside those communities, it's a different story. Many (though still not most) business owners have tried ChatGPT. Some use it daily, but the gap between "I chat with AI sometimes" and "I've restructured how my business operates around agents" is enormous. Most don't have a shred of technical aptitude (let alone a team of engineers), or the time to learn the difference between GPT-5.2, Claude Opus 4.6, and Gemini, never mind how to orchestrate OpenClaw agents to triage their email and manage their calendars.

They're just trying to make payroll and serve their customers.

Agents are still in the command line era

In a recent Stratechery post about Microsoft, Ben Thompson wrote:

"AI writing code works because it's a nearly perfect match of probabilistic inputs and deterministic outputs: the code needs to actually run, and that running code can be tested and debugged."

On his Sharp Tech podcast, Ben and co-host Andrew Sharp explored the other side of the coin: much real-world knowledge work is more nuanced than coding. Think sales calls, vendor negotiations, employee management, and customer relationships. The more a role depends on human judgment, the harder it is to hand to a machine (for now, at least).

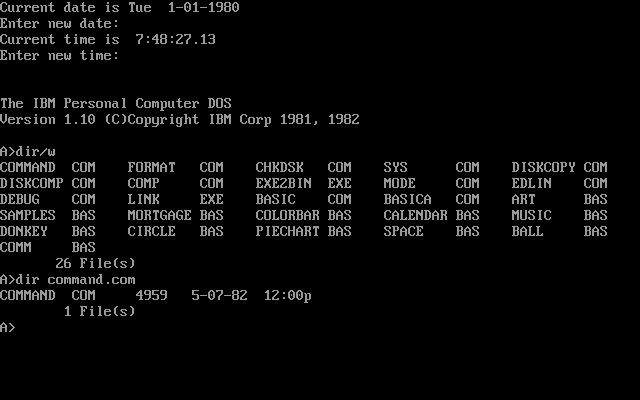

If you're following this stuff closely, it's clear that we're still living in the command line era of AI agents: they're powerful, but only for people who already speak the language.

But it also feels like that's changing, fast.

How do you reach everyone? Do less

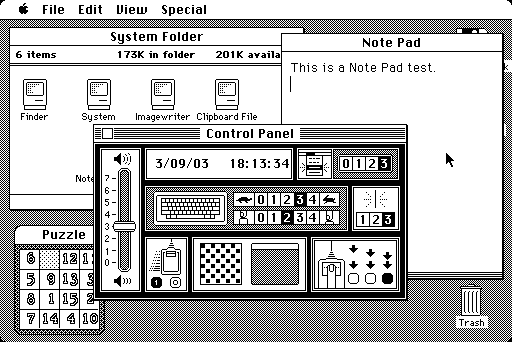

In 1984, Steve Jobs introduced the world to the Macintosh: a personal computer that brought the graphical user interface to the masses.

On paper, the IBM PCs of the era were technically more powerful. But they required users to know their way around the command line to do anything useful with them.

The Mac could do less. But what it could do, anyone could do.

'Normal' people with no technical aptitude started pointing, clicking, and creating. The GUI ended up being the gateway drug that onboarded billions of people to computing, and the rest is history.

OpenClaw isn't 1984

The explosive growth of OpenClaw is giving us a glimpse of what's possible: personalized AI agents automating everything from email to building applications from your phone.

But it's a power-user tool, and it carries huge security risks for the many people taking a YOLO approach. One of the project's own maintainers said:

"If you can't understand how to run a command line, this is far too dangerous of a project for you to use safely."

Of course, that hasn't stopped people from diving in headfirst. The demos people are sharing of agents booking flights, building apps, and managing inboxes deserve the attention they're getting. OpenClaw is a preview of the future, but it isn't the Macintosh moment for AI agents.

It's becoming increasingly clear that AI can already do a lot of knowledge work for us. The question now is: who gets to use it? What's missing is the right interface to put these capabilities within reach of the 400 million+ businesses around the world that don't employ software engineers.

For many founders and leaders, finding ways to make better use of AI in their business is starting to feel like more than a 'nice to have.' They're right: the gap between businesses that figure this out and those that don't will be existential.

Cockpits vs cars

Since OpenClaw exploded, there has been a rush of products promising agentic AI for everyone. Most of the products showing up today are still built by technical people for technical people. Use them and you'll see: they all feel like the cockpit of a 747. They assume you already understand what an agent is, what a workflow looks like, what an API does, etc.

Normal people don't know how to fly a plane, but they can drive a car. Soleio, a deservedly respected design leader in Silicon Valley, articulated it well:

The difference between a cockpit and a car dashboard is selection: knowing what matters, having the confidence to cut everything else, and making the result feel obvious, not oversimplified.

The technical products that achieve widespread adoption abstract away the complexity while keeping the capabilities intact:

- The original Macintosh hid the command line behind point-and-click.

- Google reduced the entire internet to a single text box while Yahoo was still offering a directory.

- The iPod put 1,000 songs behind a scroll wheel while existing MP3 players needed a manual.

- The iPhone stripped away physical keyboards and indirect input to put the internet in our pockets.

- Stripe made it ludicrously easy to accept payments without months of merchant account paperwork.

All these products (and many more) did less on the surface so that more people could use them.

Hide the wiring, show the outcome. For AI agents, that means letting someone describe what they need in plain language and having agents figure out how to deliver it. This is as much a design and UX problem as it is a technical one.

Today, taste is still what separates the products that will work for normal people from the ones that won't. There's a lively debate about taste happening in the startup community right now (What is it, actually? What's it worth? Can AI learn it? Does it even matter?).

Wherever the vibe around AI and taste lands this week, Jason Fried's point is more fundamental. Whether and how quickly AI develops its own taste is a fascinating question, but until it does, opinionated product design still matters.

We're taking this approach as we design Toyo. Cockpit tools help technical teams move faster. We want to help everyone else move at all: say what needs to happen, and agents figure out how to do it.

If you've been thinking "I want all of this leverage, but I don't know how to get it, and I don't have the time to learn," we're building for you.

The Macintosh moment for AI agents is coming. We intend to ship it.